Dark Spider was one of my first dark net projects, and one that pushed me to think outside the box.

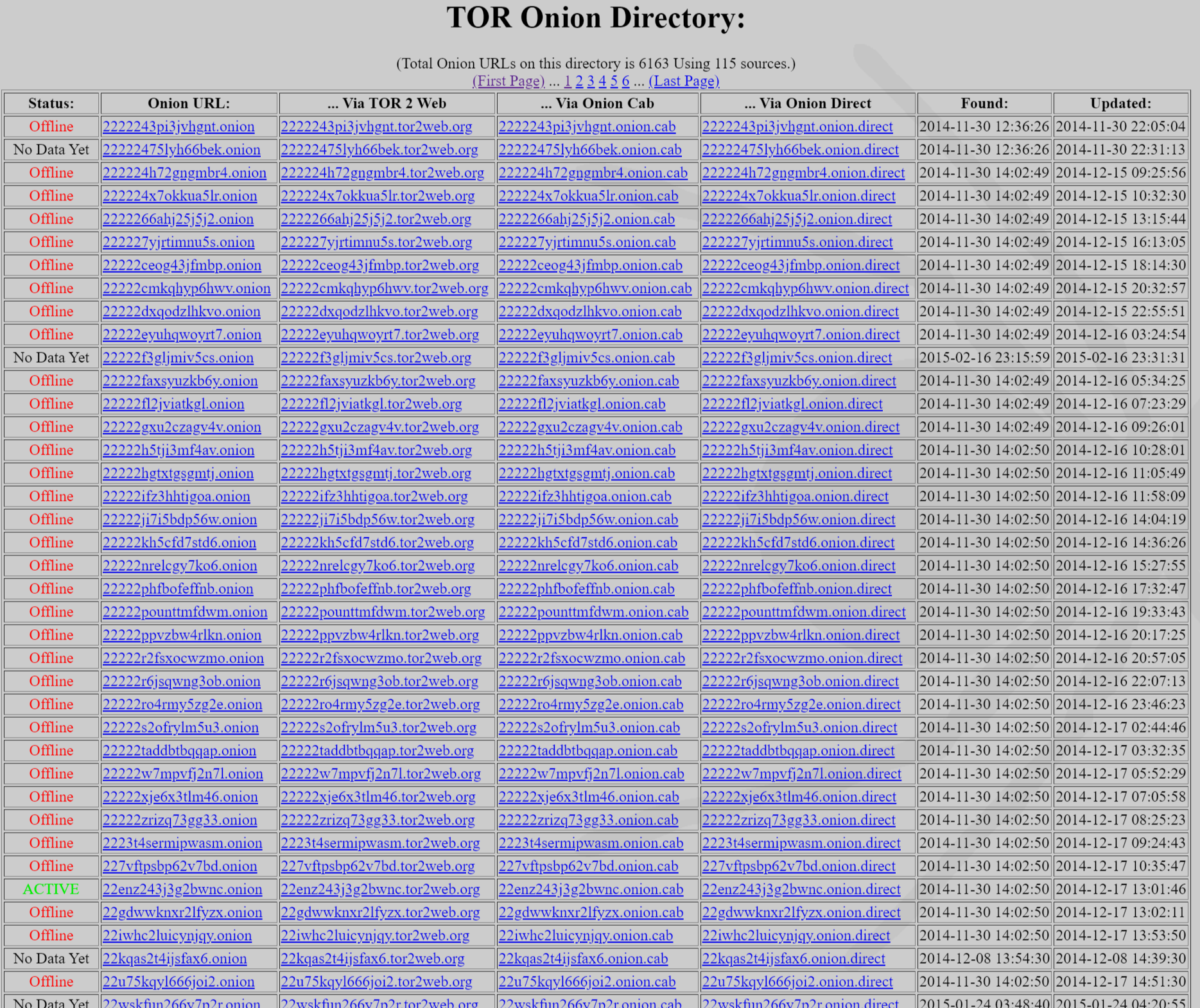

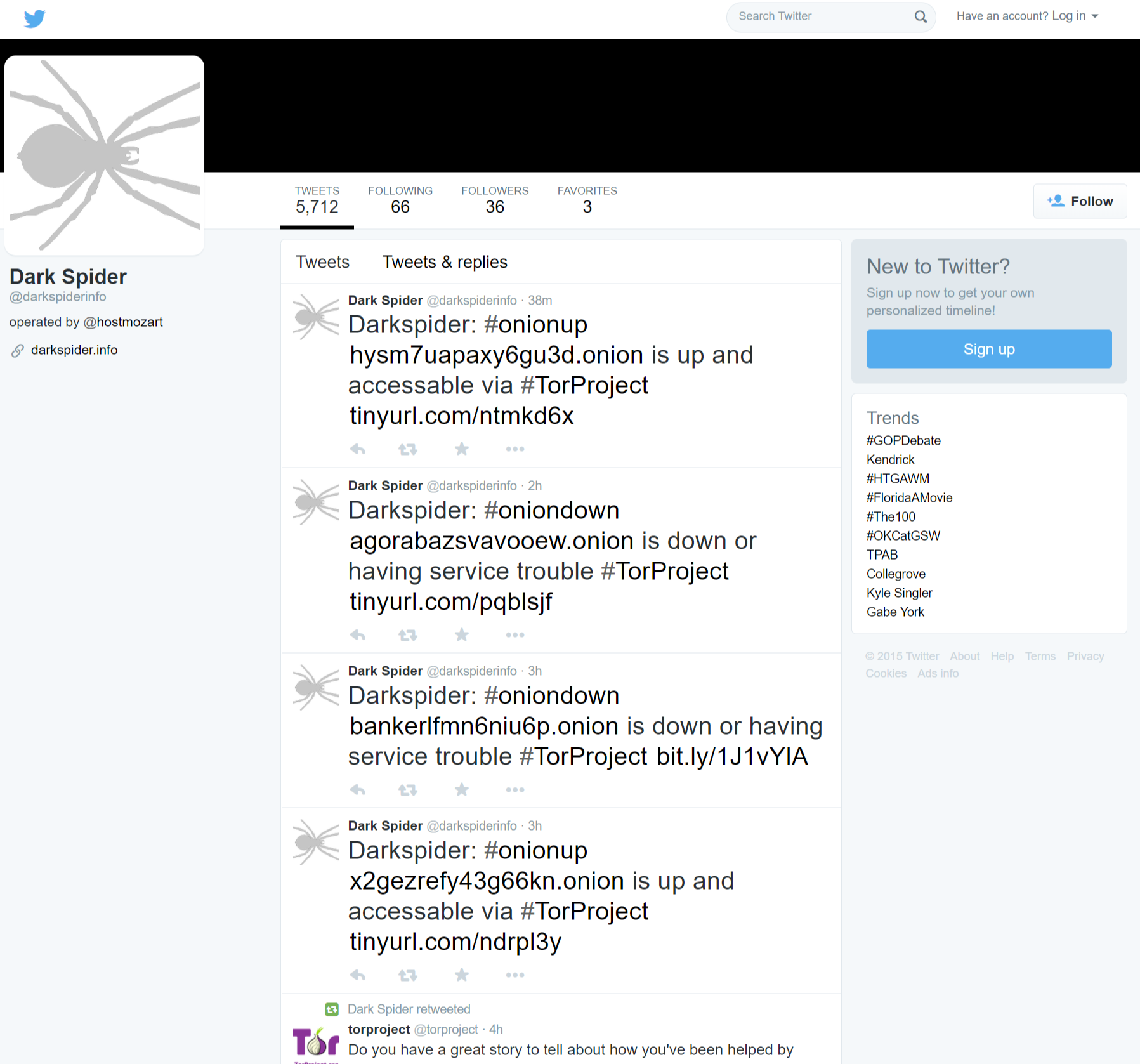

It started with a basic idea: build an index, or directory, of every known onion URL on the Tor network that could be found, whether through the onion indexes themselves, public websites, or links on sites already in the list.
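A minimal sketch of how this kind of discovery loop might look, not the original Dark Spider code. It assumes onion addresses are 16 (v2) or 56 (v3) base32 characters followed by `.onion`, and that a caller supplies a hypothetical `fetch` function that returns a page body for a given onion host. The names `extract_onions` and `build_index` are made up for illustration.

```python
import re
from collections import deque
from typing import Callable, Iterable

# v3 addresses are 56 base32 characters, legacy v2 addresses are 16.
ONION_RE = re.compile(r"\b((?:[a-z2-7]{56}|[a-z2-7]{16}))\.onion\b")


def extract_onions(text: str) -> set[str]:
    """Return every onion host mentioned in a block of text."""
    return {m.group(1) + ".onion" for m in ONION_RE.finditer(text.lower())}


def build_index(seeds: Iterable[str], fetch: Callable[[str], str],
                max_sites: int = 1000) -> set[str]:
    """Breadth-first discovery: each fetched page can add new onions to the list.

    `fetch` is a hypothetical callable (for example, an HTTP client routed
    through a Tor SOCKS proxy). `max_sites` is a soft cap on the index size.
    """
    known = set(seeds)
    queue = deque(known)
    while queue and len(known) < max_sites:
        host = queue.popleft()
        try:
            body = fetch(host)
        except Exception:
            continue  # unreachable sites are common; skip and move on
        for found in extract_onions(body):
            if found not in known:
                known.add(found)
                queue.append(found)
    return known
```

The same `extract_onions` helper works on any text source, so pages from public websites and existing onion indexes can feed the same queue.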

The next step was to gather as much information as possible from the found sites themselves. This included hashes of the complete generated source code, creation dates, changes over time, and even hashes of the images found on the sites.
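As a rough illustration of that fingerprinting step, the sketch below hashes a page's source and its images and compares two snapshots to detect changes. SHA-256 is an assumption here, since the hash algorithm is not stated, and the `Snapshot` structure and function names are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


def sha256_hex(data: bytes) -> str:
    """Hex digest of raw bytes (page source or image content)."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class Snapshot:
    onion: str
    source_hash: str
    image_hashes: dict[str, str]  # image URL or path -> content hash
    seen_at: datetime


def take_snapshot(onion: str, source: bytes, images: dict[str, bytes],
                  now: Optional[datetime] = None) -> Snapshot:
    """Fingerprint one crawl of a site."""
    return Snapshot(
        onion=onion,
        source_hash=sha256_hex(source),
        image_hashes={name: sha256_hex(data) for name, data in images.items()},
        seen_at=now or datetime.now(timezone.utc),
    )


def has_changed(old: Snapshot, new: Snapshot) -> bool:
    """True if the source or any image differs between two crawls."""
    return (old.source_hash != new.source_hash
            or old.image_hashes != new.image_hashes)
```

Storing only hashes keeps the index small, and identical image hashes across different onions can hint that two sites share content.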

The spider could crawl multiple onions and nodes simultaneously and compare the server responses seen on the nodes against the Tor output.
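One way such a concurrent comparison could be sketched, under stated assumptions: each vantage point (a node, or a plain Tor client) is represented by a hypothetical fetcher callable that returns the raw response bytes, and responses are compared by SHA-256 hash. This is an illustration of the idea, not the actual Dark Spider design.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable

Fetcher = Callable[[str], bytes]  # hypothetical: onion host -> response body


def compare_responses(onions: Iterable[str], fetchers: dict[str, Fetcher],
                      max_workers: int = 8) -> dict[str, dict[str, str]]:
    """Fetch every onion through every fetcher in parallel.

    Returns {onion: {fetcher_name: hash}} only for onions whose responses
    differ between vantage points. Failed fetches are recorded as "error".
    """
    def digest(job: tuple[str, str]) -> tuple[str, str, str]:
        onion, name = job
        try:
            return onion, name, hashlib.sha256(fetchers[name](onion)).hexdigest()
        except Exception:
            return onion, name, "error"

    jobs = [(onion, name) for onion in onions for name in fetchers]
    results: dict[str, dict[str, str]] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for onion, name, value in pool.map(digest, jobs):
            results.setdefault(onion, {})[name] = value

    # Keep only the onions where at least two vantage points disagree.
    return {o: h for o, h in results.items() if len(set(h.values())) > 1}
```

Threads suit this workload because the time is spent waiting on slow network responses rather than on computation.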

Dark Spider became the base for other projects built for the same client.